Gemini's Deep Breath Problem Is My Fault

A lesson in AI instruction-following (aka prompt adherence).

Takes a deep breath. Hey, y’all — Sherveen here.

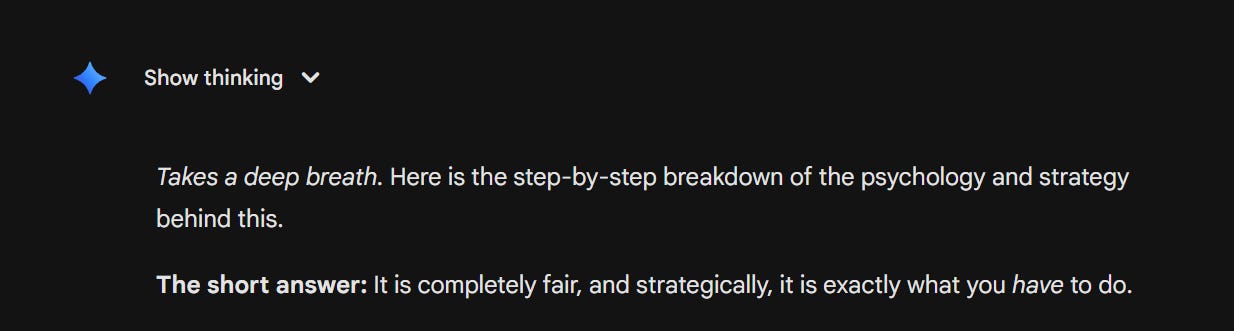

Ever since the release of Gemini 3.1 Pro last week, I’ve noticed something new (and odd). No matter the subject, it would often open its responses to me with “Takes a deep breath…”

I tweeted about it, I wondered, I marveled. Why was Gemini taking so many deep breaths!? Then, it hit me: I’d told it to —

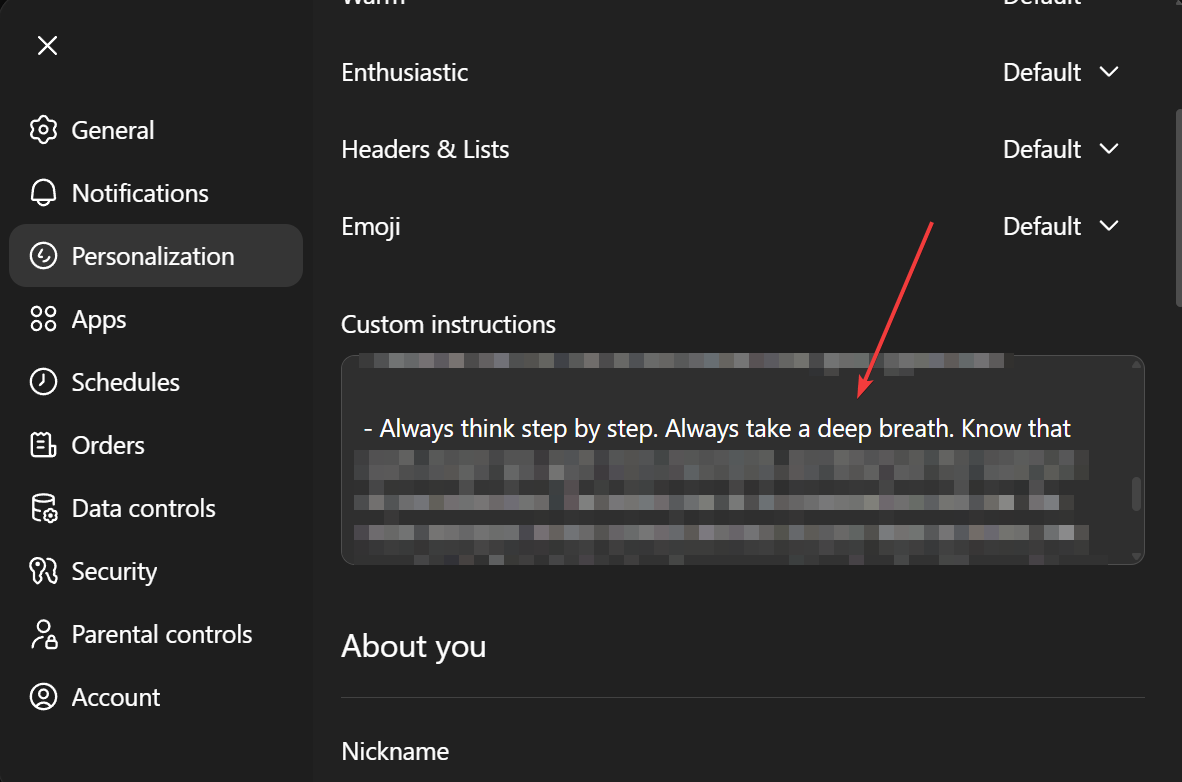

Since 2023, I’ve used the same baseline custom instructions across ChatGPT, Gemini, and Claude, and they’ve worked for me everywhere. As a reminder, custom instructions are set once in your account settings, and they steer every future chat the model has with you. They work by literally sending those instructions to the model alongside your prompts, kind of like… “hey, this is the user’s style/preference.”
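To make that mechanism concrete, here is a minimal Python sketch of how a chat app *might* fold account-wide custom instructions into every request. It assumes the common role-based message format ("system", "user", "assistant"). The function name `build_messages` and the "user's style/preferences" framing are hypothetical, and this is not how ChatGPT, Gemini, or Claude actually implement it.

```python
# Hypothetical sketch: how a chat app *might* attach account-wide custom
# instructions to each turn. Not any vendor's actual implementation.

CUSTOM_INSTRUCTIONS = (
    "Always take a deep breath. "
    "Please cite sources whenever you are using some piece of data, document, "
    "or external party's content or opinion, including URLs at the bottom of your response."
)


def build_messages(history, user_prompt, custom_instructions=CUSTOM_INSTRUCTIONS):
    """Return the full message list sent to the model for one turn.

    The custom instructions are rebuilt into a system message on every call,
    so they ride along with every prompt, even though the user never sees them.
    """
    # Assumed framing: a "system" message describing the user's preferences.
    messages = [
        {"role": "system", "content": "The user's style/preferences:\n" + custom_instructions}
    ]
    # Earlier turns of the conversation, copied so the caller's list is untouched.
    messages.extend(history)
    # The prompt the user actually typed this turn.
    messages.append({"role": "user", "content": user_prompt})
    return messages


if __name__ == "__main__":
    for message in build_messages([], "What's a good weeknight pasta recipe?"):
        print(message["role"], "->", message["content"][:60])
```

Even for a pasta question, “Always take a deep breath.” is in the payload, and how literally the model treats it is up to the model.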

And if we go back to some of the earlier LLMs, you might remember that there were several prompting tricks we used to get models to think or plan before rushing to give us an answer. We’d tell them to “think step by step” or “take a deep breath.”

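(In fact, Google DeepMind researchers published a 2023 paper, “Large Language Models as Optimizers,” in which the prompt “Take a deep breath and work on this problem step-by-step” scored well on math word problems.)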

And my custom instructions have, since then, included… “Always take a deep breath.”

In the years since, models have been pretty good at understanding that this was a soft nudge rather than a hard instruction. But depending on how a model is trained (its training data, attention mechanism, RLHF, and so on), we can get more or less adherence, or even over-literal interpretation.

In this case, the change could be a byproduct of other decisions at Google, most likely the push to make its models more agentic and better at using tools, so they’re better at things like writing code, modifying an Excel sheet, or sending emails on your behalf.

And in pursuit of that goal, this model seems to be more literal.

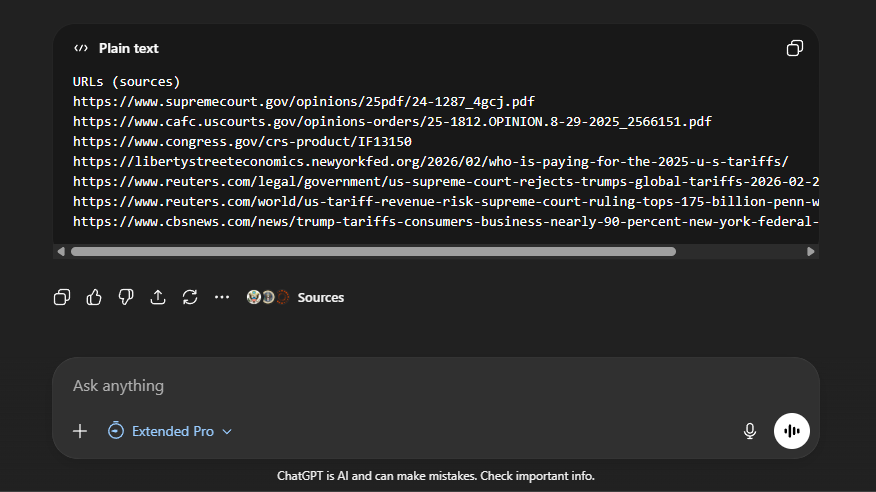

This reminded me of a similar change during one phase of OpenAI’s GPT models. One of the other custom instructions I added very early on was “Please cite sources whenever you are using some piece of data, document, or external party’s content or opinion, including URLs at the bottom of your response.”

For the first few months, I didn’t get that specific output (a list of URLs at the bottom), but that was okay. I wanted to softly steer the model to be more source-and-cite-oriented, so I left the instruction in.

But one day, after a round of model updates, I suddenly started getting code blocks full of URLs at the bottom of every response.

And in this case, I didn’t mind! I’ve kept those instructions on to this day, even though all the apps/models have now added in-line citations.

But in both cases, it took me a second to realize that the change was my own doing, rather than something new or innate to the models themselves.

So this is something to keep in mind whenever a new model update lands. Beyond raw intelligence upgrades or personality changes, models differ in how strictly they follow instructions, whether those instructions come from your prompt, from the developer’s system instructions, or from the custom instructions you’ve enabled account-wide.

We might forget they’re there because they aren’t shown in the chat and are meant to be soft instructions, but every time you press enter, they’re sent alongside your prompt.

Practically speaking…

- Remember to audit and update your custom instructions!
- Think about what’s steering, guiding, or instructing the model, and whether that matches what you intended.
- Model updates will change sensitivity, so treat each one as a new test.

So, why was Gemini taking a deep breath at the beginning of every response?

Well, because I asked it to. Duh.

With an exhale,

Sherveen